Two years ago, I wrote that AI will not replace software engineers. I still believe that. But I was wrong about something important — I framed it as a binary. Replace or don’t replace. Survive or don’t survive. That framing missed the point entirely.

What’s actually happening is a repricing. A massive, silent, irreversible repricing of what human work is worth.

Before we dive in, try this: Think of five things you do at work that no one ever taught you explicitly — things you just know from years of experience.

Just grab a pen and come back. 🖊️

The cost of thinking just crashed

Here’s a number that should keep you up at night. The cost to process a million words with AI dropped from ~$75 to ~$1.25 in roughly a year. That’s a 98% reduction.
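Here is a quick sanity check on that arithmetic, as a minimal Python sketch. The two prices are the approximate figures from the sentence above. The helper name `percent_reduction` is only for illustration. Going from about $75 to about $1.25 is roughly a 98% reduction, which is the same thing as a 60× price drop.

```python
def percent_reduction(old: float, new: float) -> float:
    """Percentage drop when a price falls from old to new."""
    return (1 - new / old) * 100


old_cost, new_cost = 75.0, 1.25  # approx. USD per million words, per the text

print(f"{percent_reduction(old_cost, new_cost):.1f}% cheaper")  # 98.3% cheaper
print(f"{old_cost / new_cost:.0f}x cheaper")                    # 60x cheaper
```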

Now think about what cognitive labor actually is. It’s the stuff you do on a laptop. Research, analysis, summarization, first drafts, code generation, data extraction, scrolling, Ctrl+C and Ctrl+V. Every single one of those tasks is dropping in cost so fast that “cheap” barely covers it.

When thinking becomes cheap, the thinkers don’t disappear — their price tags change.

The signs are everywhere. Companies are already reporting that a single AI agent can handle workflows that used to require entire analyst teams. Not because the agent is smarter — because it costs pennies per hour and doesn’t need a lunch break.

But here’s the thing most people miss. Cost collapse isn’t destruction. It’s redistribution. Every skill AI devalues creates explosive demand for something it can’t touch.

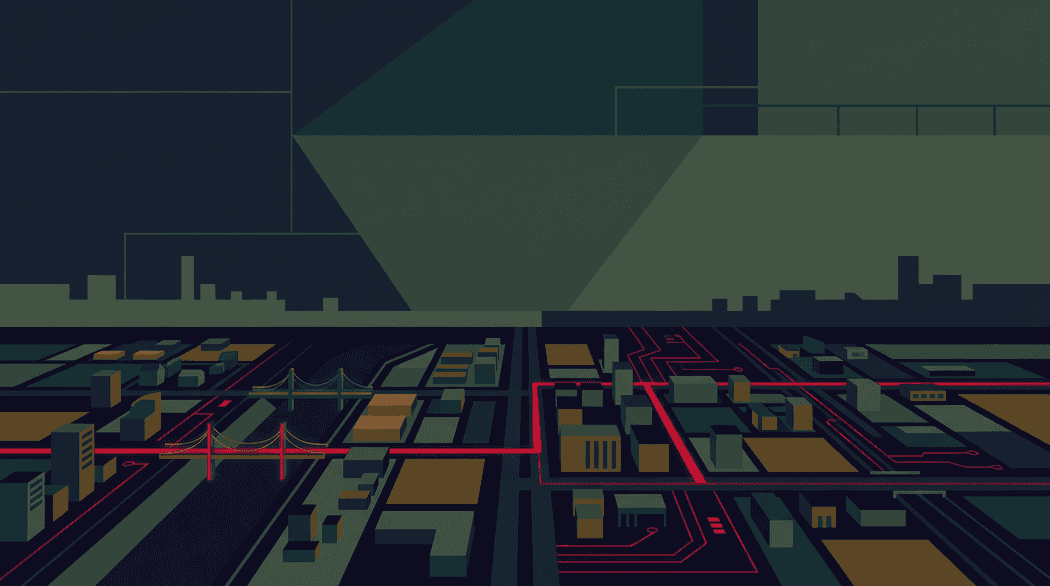

Immersive Background: A 98% drop in the cost of cognitive labor in just one year.

What becomes rare when thinking becomes cheap

For generations, the advice was simple: get educated, specialize, become the expert. The more specialized your cognitive labor, the more you earned. That equation held for decades.

That playbook is now obsolete.

Specialized theoretical knowledge — the kind that lives in textbooks, databases, and documentation — is exactly what LLMs eat for breakfast. If your expertise can be Googled, it can be generated. If it can be generated, its market price is approaching zero.

And that would be bad enough on its own. But here’s what makes it worse — most of us weren’t even stretching that expertise in the first place.

Somewhere along the way, a lot of engineers (most of us, if we’re honest) stopped thinking and started executing. We became blind executors: taking tickets off a board, translating specs into code, shipping what we were told to ship without ever questioning whether it was the right thing to build. If it’s not in the code, it’s not our job. If it’s not JavaScript, it’s not our job. If it’s not in the particular framework or technology we’re using (or want to use), it’s not our job.

Those days are gone, my friend. That comfort zone just got deleted. AI executes now. Faster, cheaper, and without complaining about the sprint scope.

The Remote Labor Index (a benchmark by Scale AI and the Center for AI Safety) tested frontier AI agents on 240 real freelance projects from Upwork. The best agent completed just 2.5% of projects at a quality a paying client would accept. Meanwhile, OpenAI’s own GDPval benchmark shows the same models approaching expert-level quality when all context is pre-packaged.

Both numbers are real. The difference? GDPval provides everything — the brief, the format, the success criteria. The Remote Labor Index hands the agent a client brief and says figure it out. That’s the gap between tasks and jobs. And it’s the gap that defines your career’s future value.

I’ve seen this “context gap” before in a completely different place: my YouTube channel.

When I was working on my YouTube videos, I hired an editor to publish content faster. His first attempt needed a few minor adjustments (a complete redo) — not because he wasn’t talented, but because I wanted something specific. I’m annoyingly detailed about certain things. I already had a style. A pacing. A rhythm. The little cuts I like. The parts I want to linger on. The parts I want to murder instantly. How the b-rolls would appear, and when. What the different lower thirds mean. What colors and shapes we should use, and how to animate them. Uff, a lot of stuff, trust me. This guy is a hero!

So I had to do the unsexy work: explain what “good” looks like in my world.

That’s the same story with AI. AI doesn’t need “talent” from you. It needs context — because it cannot enter your brain… yet.

And yes, maybe one day we’ll reach a point where you don’t need to explain anything. But until then, the winners are the people who can translate taste into instructions.

So what’s left for us to do? Everything that matters.

And that word — taste — is doing more work than it looks. Taste isn’t preference. It’s accumulated judgment. It’s the reason a senior engineer looks at a technically correct solution and says “no” without being able to fully explain why for another ten minutes. It’s the reason two engineers can receive the same prompt and produce wildly different results — not because one knows more, but because one knows what to want.

This is what actually separates senior engineers from junior ones. It was never about knowing more syntax or memorizing more patterns. It was always about taste — the ability to look at ten valid options and feel which one is right for this system, at this moment, given everything you’ve absorbed that you can’t quite put into words. Juniors can build what you describe. Seniors know what’s worth describing.

And I don’t know about you, my friend, but when I hire for my team I look for taste over raw execution speed. Because the person who has taste will eventually learn how to build things. The person who only knows how to build — but not what’s worth building — will spend years catching up to what taste gives you on day one.

And now that AI handles the building, that distinction isn’t subtle anymore. It’s the whole game.

Because this shift has an upside that nobody’s talking about enough. Everything AI can’t do effortlessly? That’s experiencing an unprecedented spike in value.

We’re not witnessing the death of human value. We’re witnessing the biggest reallocation of it in history — and it rewards the things we should have been doing all along.

But how do you build taste? Well, my friend, just keep reading. The rest of this article is, in many ways, the answer.

The demand nobody sees coming

Here’s something that should reframe the entire “AI is killing jobs” narrative.

Every single time the cost of computing has crashed — mainframes to PCs, PCs to cloud, cloud to serverless — the total amount of software produced didn’t shrink. It exploded. New categories that were economically impossible at the old price point became viable, then ubiquitous, then essential. SaaS didn’t exist before cloud. Mobile apps didn’t exist before smartphones. These weren’t cheaper versions of old software. They were entirely new markets.

This is the Jevons paradox in action. When a resource gets dramatically cheaper, demand doesn’t just hold steady. It grows, often faster than the cost drops.

And we’re about to see it again. At industrial scale.

We have never found a ceiling on the demand for software. And we have never found a ceiling on the demand for intelligence.

Think about all the companies that need software but can’t afford it. Regional hospitals. Mid-market manufacturers. Family logistics companies. A custom inventory system costs $500K+. A patient portal integration costs $300K+. These businesses make do with spreadsheets and manual processes because the economics never worked.

AI is dropping software production costs by 10× or more. Suddenly, that $500K system becomes a $50K system. Entire industries that were locked out of custom software are about to get unlocked.
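The Jevons argument above can be made concrete with a textbook constant-elasticity demand curve. This is a minimal sketch under assumptions that are mine, not the article’s: demand for software is modeled as Q = A·P^(-ε), and the elasticity values are made-up illustrations, not measurements. It shows that a 10× price drop (the $500K to $50K example) always increases how much software gets built, but increases total spending only when ε > 1, which is the “demand grows faster than the cost drops” case.

```python
def quantity_ratio(price_drop_factor: float, elasticity: float) -> float:
    """How many times more gets bought after the price falls by price_drop_factor.

    Assumes constant-elasticity demand Q = A * P**(-elasticity).
    """
    return price_drop_factor ** elasticity


def spending_ratio(price_drop_factor: float, elasticity: float) -> float:
    """How total spending P * Q changes after the drop (> 1 means it grew)."""
    new_price_over_old = 1 / price_drop_factor
    return new_price_over_old * quantity_ratio(price_drop_factor, elasticity)


# Hypothetical elasticities; a 10x drop mirrors the $500K -> $50K example.
for eps in (0.5, 1.0, 1.5):
    print(f"eps={eps}: quantity x{quantity_ratio(10, eps):.2f}, "
          f"spending x{spending_ratio(10, eps):.2f}")
```

With ε = 1.5, a 10× price cut yields about 31.6× more software and about 3.2× more total spending. With ε = 0.5, more software still gets built, but total spending falls to about a third.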

The biggest opportunity isn’t making existing software cheaper. It’s building for markets that never existed before.

Immersive Background: Framing the right question is more valuable than finding the right answer.

Frame the question, don’t find the answer

Let me ask you something. When was the last time you were paid to find an answer?

Because AI can find answers now. It can summarize research papers, debug code, analyze datasets, and draft reports faster than any human. The answer-finding business is over.

But here’s the thing. Even the most advanced agentic tools — systems like OpenClaw that can spin up a CEO agent, a CTO agent, a product owner agent, and have them debate priorities autonomously — still operate inside a boundary someone else drew. They can decompose a goal into sub-questions brilliantly. They can decide what to investigate next. But they can’t decide whether the goal itself is worth pursuing. They can’t feel that the market shifted last Tuesday because three clients canceled for reasons that aren’t in any dashboard. They can’t know that your cofounder is burning out and the ambitious roadmap needs to shrink, not grow. The meta-question — should we even be playing this game? — still requires someone with brain cells in the game.

The person who frames the right question captures more value than the person who finds the right answer.

Think about it. AI can generate fifty analyses in seconds. But only a human with real domain context knows which analysis actually matters. Only a human can look at a messy, ambiguous business situation and say, “Wait — we’re solving the wrong problem.”

This is what I call the problem-framing multiplier. As solution costs approach zero, the ability to define the right problem becomes the most valuable thing you can do.

I see this constantly in my own work as a software architect. The technical decisions that matter most aren’t about how to build something — AI handles that increasingly well. They’re about what to build and why. The framing. The judgment call. The constraint nobody else saw.

No prompt engineering trick gives you that. It accumulates through years of building, shipping, debugging production at 2am, and learning from systems that broke in ways you didn’t expect.

But here’s what changed my own relationship with AI: I stopped treating it as a tool and started treating it as a thinking partner. Not a replacement for my judgment — a sparring partner for it. I throw half-formed ideas at it. I ask it to poke holes in my architecture. I use it to stress-test assumptions I’d otherwise carry unchecked into production.

AI isn’t the engineer. It’s the engineer’s thinking partner. The best hack is having someone who never gets tired of asking “what if?”

The engineers who are struggling right now are the ones using AI to skip thinking. The ones thriving are using it to think harder — to explore more options, challenge more assumptions, and arrive at better decisions faster. Same tool, opposite mindset.

The coordination layer is dissolving

Let me tell you about something I’ve been watching closely, and it’s genuinely unsettling if you think about it.

For decades, software engineers communicated through code, through tickets, through carefully worded Slack messages — anything to avoid opening our mouths. Noise-cancelling headsets on. Heads down. Do not disturb. Every organizational structure in software — standups, sprint planning, code review ceremonies, QA teams, release management — exists because humans building software in teams need coordination. We need to sync. We need to unblock each other. We need to communicate context that would otherwise be lost.

But when AI handles implementation? These coordination structures don’t become optional. They become friction. The keyboard was our last wall. And this year, we tore it down.

I recently studied how StrongDM runs what they call a Software Factory — or as it’s more widely known, a Dark Factory — a software operation where three engineers, not thirty, produce enterprise-grade software. No sprints. No standups. No Jira board. They write specifications and evaluate outcomes. That’s it.

The entire coordination layer that constitutes the “operating system” of a modern software org simply does not exist. Not as a cost-saving measure — but because it no longer serves a purpose.

And it’s not just startups. Spotify told Wall Street analysts during their Q4 2025 earnings call that the company’s best developers “have not written a single line of code since December.” They use an internal system called Honk — powered by Claude Code — where, for example, an engineer on their morning commute can describe a bug fix from Slack on their phone, get a new build pushed back to Slack, and merge to production before they arrive at the office. Fifty-plus features shipped in 2025. The coordination layer didn’t get optimized. It got deleted.

When AI does the building, the value shifts from coordination to articulation.

The numbers are staggering. Traditional SaaS averages about $600K revenue per employee. Top AI-native startups like Cursor and Midjourney? $3M+ per employee. That’s a 5× gap, explained almost entirely by the deletion of coordination overhead.
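The ratio itself is simple arithmetic, sketched below in Python with the two figures quoted above. Because “$3M+” is a lower bound, 5× is the minimum gap. The $30M revenue target is a hypothetical number chosen only to show what the ratio means in headcount. The code shows the size of the gap; it says nothing about its cause, which is the article’s argument, not something the arithmetic proves.

```python
def revenue_per_employee_gap(ai_native: float, traditional: float) -> float:
    """Ratio of revenue per employee between two kinds of companies."""
    return ai_native / traditional


TRADITIONAL_SAAS = 600_000   # approx. revenue per employee, from the text
AI_NATIVE = 3_000_000        # "$3M+" per employee (a lower bound), from the text

gap = revenue_per_employee_gap(AI_NATIVE, TRADITIONAL_SAAS)

# Headcount needed for a hypothetical $30M in annual revenue at each ratio.
target_revenue = 30_000_000
print(f"gap: {gap:.0f}x")                                              # gap: 5x
print(f"traditional headcount: {target_revenue / TRADITIONAL_SAAS:.0f}")  # 50
print(f"AI-native headcount: {target_revenue / AI_NATIVE:.0f}")           # 10
```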

This doesn’t mean all management becomes obsolete. But it means the job of management fundamentally changes. Instead of “coordinate the team building the feature,” it becomes “define the specification clearly enough that autonomous agents build the feature.” That’s a completely different skill.

And honestly? Most organizations are nowhere near ready for that shift.

The engineers who thrive aren’t just the ones who can frame the right problem. They’re the ones who can say it clearly enough that a machine — and a human — can act on it.

Some people, though, are already giving this shift a name. There’s an emerging role called the Forward Deployed Engineer: an engineer embedded in the client’s problem domain, not sitting in a back office translating tickets into code. And “client” here doesn’t mean you work at a consultancy. Your client is whoever owns the business problem: a product manager, a department head, your tech lead, the end users your team serves.

The FDE doesn’t code. Doesn’t attend standups. Doesn’t manage sprints. Doesn’t review pull requests. What they do is sit at the client’s table, absorb the domain until they understand it better than the client can articulate, and turn that understanding into specifications so precise that autonomous agents can build from them without a single clarifying question. Then they verify the outcome. That’s the whole job.

The title is deliberate. “Forward Deployed” means at the client’s table. “Engineer” means rigorous discipline — not consulting, not project management. And the skill that matters most? Domain analysis. Understanding a client’s SEPA payment settlement windows and dual-approval thresholds and legacy MQ integrations at operational depth. The kind of knowledge AI cannot generate because it was never in a training set. It has to be earned through immersion.

The FDE isn’t a future fantasy. It’s the logical endpoint of every trend I describe in this article. When AI handles implementation, the human who owns the business problem end-to-end becomes the most valuable person in the room.

Read that description again. The FDE isn’t a software engineer. Isn’t a business analyst. Isn’t a solution architect. Isn’t a domain consultant. They’re all of those things at once, because AI just collapsed the implementation layer that used to justify keeping them separate. The old model needed five specialists because building was slow. When building is instant, you don’t need five people to understand the problem from five angles. You need *fewer* people, but each one needs to understand their slice of the problem from every angle. Complex systems don’t shrink to one person. They shrink from thirty specialists to a small team of FDEs, each owning a domain end-to-end.

But here’s what that role makes obvious: the most valuable skill in that description isn’t domain knowledge. It’s the ability to communicate it. Translating messy human business context into precise, machine-executable specifications — and then translating AI output back into human decisions — requires a communication muscle most engineers never had to build.

We used to live in the engine room. The business side spoke to product managers, product managers spoke to tech leads, tech leads spoke to us. Communication was optional — layers of translation insulated us from having to articulate anything directly. That was fine, because our value was in the execution. And AI just took the execution.

We don’t write code anymore. We chat with our AI agents. We talk to them. We dictate to them in every way possible. The keyboard was our last wall, and we tore it down.

The insulation is gone. When you’re the one bridging human intent and AI output, there’s nobody left to translate for you. And it turns out the skill required to write a specification precise enough for a machine is the same skill required to run a good meeting, send an unambiguous message, or convince a room. Engineers who spent years avoiding communication are now practicing it daily — through their tools. The ones who take that seriously are gaining ground fast.

Your body knows things vector databases don’t

Here’s my favorite insight from all of this: the most AI-proof knowledge you have isn’t in your head. It’s in your body.

There’s a kind of knowledge that lives in muscle memory, intuition, and lived experience — not in any document or database. AI literally cannot absorb it. Because it was never written down.

Think about a senior engineer who opens a pull request and immediately knows something is off — not from the diff (they haven’t read it yet), but from years of reading how systems break. Sometimes the title alone is enough — you already know what you’ll find before you read a single line. Or a tech lead who senses the sprint is going sideways on Monday morning, before the standups, before the blockers are logged, before anyone says a word. No Jira query told them. Their nervous system did. These aren’t mystical talents. They’re pattern recognition built through thousands of embodied repetitions that never made it into a training set.

AI learns from text. Your deepest expertise lives in the gap between what you do and what you can articulate.

LLMs can’t train on what was never recorded. They can’t scrape intuition. They can’t fine-tune on the feeling you get when a codebase is about to get spaghettified, or when a client meeting isn’t fruitful, or when an architecture direction “smells” wrong even though it passes every checklist.

As AI commoditizes explicit knowledge — facts, procedures, analysis — the premium on that unwritten expertise increases. The surgeon, the craftsperson, the seasoned engineer — their value rises precisely because AI makes everything else cheaper.

Think about the last time you knew something felt wrong before you could explain why. That’s your edge. That’s your competitive advantage.

But experience alone isn’t enough anymore. Having it is one thing. Making it usable is another.

Experience is an asset. Encoded experience is a weapon.

The real shift is from holding context in your head to encoding it — into evaluations, guardrails, documentation, and constraints that AI agents can actually follow. It’s not enough to know which infrastructure is production and which is temporary. You have to write that down in a way that prevents an agent from demolishing the wrong one. It’s not enough to remember the unwritten vendor terms negotiated over dinner three years ago. You have to build that into the contract review workflow before the agent drafts something catastrophic.

The engineers who’ve figured this out are building what amount to skills for their AI agents — structured instructions that encode the judgment calls, edge cases, and domain constraints the agent needs but can’t infer on its own. Not documentation for humans. Documentation for machines. Think of it as the difference between a wiki article about your deployment process and a guardrail that prevents your agent from deploying to production on a Friday. One informs. The other constrains. And in a world where agents act autonomously, constraints are worth more than information.
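To make the difference between informing and constraining concrete, here is a minimal Python sketch. Everything in it is hypothetical: the resource names, the `production`/`temporary` tiers, the `AgentAction` type, and the `check_action` hook are illustrations, not the API of any real agent framework. It encodes the two examples from the text: an agent may not destroy a production resource, and it may not deploy to production on a Friday.

```python
from dataclasses import dataclass
from datetime import datetime

# Hypothetical inventory: which resources are production and which are temporary.
RESOURCE_TIERS = {
    "payments-db": "production",
    "api-gateway": "production",
    "load-test-cluster-42": "temporary",
}

FRIDAY = 4  # datetime.weekday() counts Monday as 0, so Friday is 4


@dataclass(frozen=True)
class AgentAction:
    """An action an agent wants to take (hypothetical shape)."""
    kind: str            # "deploy" or "destroy"
    resource: str
    requested_at: datetime


def check_action(action: AgentAction) -> tuple[bool, str]:
    """Return (allowed, reason). Unclassified resources are treated as production."""
    tier = RESOURCE_TIERS.get(action.resource, "production")
    if tier == "production" and action.kind == "destroy":
        return False, f"{action.resource} is production; destroying it needs a human"
    if (tier == "production" and action.kind == "deploy"
            and action.requested_at.weekday() == FRIDAY):
        return False, "no production deploys on Fridays"
    return True, "ok"


# A Friday afternoon deploy to production gets blocked.
friday = datetime(2025, 3, 14, 15, 0)  # 14 March 2025 was a Friday
print(check_action(AgentAction("deploy", "api-gateway", friday)))
# (False, 'no production deploys on Fridays')
```

The design choice worth copying is the default: a resource nobody classified is treated as production, so the agent fails safe. A wiki page can’t enforce that. A check that runs before every action can.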

In every case, the agent does the task well. The human ensures it’s the right task, done the right way, at this moment.

And there’s a second layer to this. It’s not just about the depth of what you know. It’s about the width of what you’ve experienced.

Go sideways

The engineers I find most interesting right now aren’t the ones who went deeper into their specialty. They’re the ones who went sideways. One plays jazz and brings a musician’s intuition for when to improvise and when to follow the score to their architecture decisions. Another does landscape photography and applies a photographer’s eye for negative space and composition to their UI reviews.

This sounds like the kind of thing you put on a LinkedIn bio to sound interesting. But there’s something real underneath it.

AI was trained on the internet. The internet doesn’t contain what your hobbies taught you.

Every domain you absorb outside of software gives you a set of mental models that no language model has indexed. The principles of jazz improvisation aren’t in any prompt engineering guide. But they transfer — and when they do, they produce connections that feel like intuition but are actually cross-domain pattern recognition built over years.

Narrow specialists are fragile right now. Depth alone gives you one lens, and AI already has that covered. The multifaceted engineer — the one who reads widely, builds things with their hands, takes up hobbies that seem completely irrelevant — is developing an input surface that no model can replicate. Because none of it was ever in the training data.

Some things I’ll never forget

Let me take you back to Thessaloniki, early 2000s. I was a teenager building websites — and I was doing it the hard way.

I’d design the entire site in CorelDRAW (think Illustrator, but for people who couldn’t afford Illustrator). Then I’d slice the design into individual image pieces, one by one. Then I’d optimize every single piece for 56K modem speeds, because if your page took more than ten seconds to load, your visitor was gone. Then I’d upload each file manually to the hosting server. Then update the HTML and CSS by hand. Then publish. Then test. Then find a bug and do it all over again.

And there was a twist. Every time I wanted to upload files, I had to connect to the Internet. Dial-up. By the minute. My family’s phone line — which meant that while I was online, nobody could call in or out. My mom couldn’t make a phone call. So I had to time my sessions carefully, upload fast, disconnect, and pray nothing failed. If I stayed online too long, the bill would spike and my parents would cut my access. So I bought prepaid Internet cards with my own pocket money — money that other kids spent on movies, drinks, going out. I was the nerd staying home on a Saturday night, spending his budget on bandwidth.

Seriously — who does this just to write a blog post about Grim Fandango?

I’ll tell you who. Someone who cares about the details.

There’s a sentence I wrote years ago that I keep coming back to — you’ll find it on the homepage of the website you’re reading right now. That wasn’t a design principle. It was a confession. I couldn’t ship something that wasn’t right. The easy path existed. I didn’t want easy. I wanted to understand every layer — because that’s how you build the instinct to know which layer matters and which one doesn’t.

AI can generate output. It cannot generate the years of navigating complexity that teach you where to look.

And here’s the twist nobody expected. That same obsessive detail work — the pixel-slicing, the modem-timing, the manual uploads — trained a muscle that now operates at an entirely different altitude. Software engineers have spent careers dealing with complexity that would take the average person months to parse. We don’t need months. We’ve been doing this our whole lives. And now? We don’t have to do it alone. We have teams of AI agents handling the details we used to drown in — freeing us to operate at the level where all that accumulated instinct actually pays off.

It’s not just unwritten knowledge. It’s not just experience. It’s the training — years of navigating complexity so dense that it became second nature. The intrinsic drive to touch the metal, to go one layer deeper, to refuse “good enough” when your gut says it isn’t. That drive didn’t make us perfectionists. It made us fast learners of hard things. And now that AI handles the details, that muscle doesn’t atrophy. It scales.

You can’t prompt-engineer that instinct. You can’t fine-tune it into a model. It accumulates through years of choosing the hard path — not because someone told you to, but because you couldn’t live with the alternative. Every engineer I know who’s thriving right now has some version of this story. The details differ. The instinct is the same.

So when people ask me if I’m afraid of the AI revolution, my honest answer is no. Not because I’m smarter than the models. Because I know I’ll navigate whatever level of complexity it takes to get things done the way they need to be done — and I’ll do it for the same reason I spent my pocket money on prepaid Internet cards, 26 years ago, instead of going to the movies.

Because I care about the craft. And now I have a team of agents that lets me care at a scale I never could before.

Don’t let AI kill your vibe—vibe code with it to amplify your craft!

We need better engineers — and yes, that includes juniors

Some people are trying to sell the idea that engineers will be gone a few years from now. Here’s what I believe, and everything in this article leads to it: we need better engineers than we’ve ever needed before. Not fewer. Better.

This sounds counterintuitive when every other headline screams about developer layoffs. But think about what’s actually happening. AI automated the easy parts: the code generation, the boilerplate, the merge-conflict resolution, the pattern matching. What’s left is the hard stuff: systems thinking, specification writing, customer intuition, judgment under ambiguity.

Alibaba’s SWE-CI benchmark tested what happens when AI doesn’t just write code but maintains it over time. 75% of frontier models broke previously working features. Code that passed every test on day one compounded into technical debt by month three.

Writing code and maintaining code are fundamentally different skills. AI is excellent at the former and catastrophically bad at the latter. The narrative isn’t “AI replaces developers” — it’s “AI generates code that humans must maintain.”

The bar rose for everyone. “Adequate” is no longer a viable career position — because adequate is exactly what the models do.

And the industry is shifting from rewarding specialists to rewarding generalists. A specialist who optimized your database queries to sub-millisecond latency but never asked whether anyone actually uses that endpoint? A specialist who can write a flawless payment microservice but has no idea why customers are abandoning checkout? A specialist who perfected your CI/CD pipeline but can’t explain to a stakeholder why Friday’s release should be delayed? All far less valuable than a generalist who understands systems, users, and business constraints across domains. When AI handles the building, the human who sees the whole board wins.

Now, here’s a twist nobody expected: some of the smartest companies aren’t just raising the bar. They’re also hiring juniors. Specifically, AI-native juniors.

These are developers who grew up with ChatGPT. They think in prompts. They can pick up an unfamiliar problem and solve it in minutes using AI across multiple tech stacks. For established teams where senior engineers feel the need to manually override every AI-generated line in the code, these AI-native juniors act as a cultural catalyst — demonstrating new workflows by doing, not by evangelizing.

Juniors review and direct AI output, learning to spot what’s correct versus subtly wrong, while seniors provide the domain context and guardrails.

The talent pipeline isn’t dead. It’s being rebuilt from the ground up.

The key distinction? AI-native doesn’t mean AI-dependent. The valuable junior is the one who uses AI as a multiplier for learning — not as a substitute for understanding. The difference is whether they can explain why the AI-generated code works, not just that it works.

One more thing that can’t be generated

As AI gets better at producing polished, convincing output — well-structured arguments, confident recommendations, perfectly framed proposals — something quietly happens to the professional landscape. All the surface signals of competence get cheap. Anyone can look reliable with the right prompt.

Which means the real thing becomes rare.

Think about who you actually trust at work. Not the person with the best output, but the person who does what they say they’ll do. Who tells you when they’re wrong before it blows up and becomes costly. Who cares about the right outcome, not how they look.

AI can generate a polished deliverable. It can’t generate a track record of telling the truth when the truth is inconvenient. It can help you sound right. It can’t make you act professionally, or own it when you don’t.

When everyone can perform trustworthiness, authenticity becomes the scarcest professional asset of all.

This isn’t a soft, feel-good point. It’s an economic one. As AI raises the ambient quality of output across the board, the signal value of that output collapses. What rises in value is the thing AI can’t fake over time: a person whose word means something. In a world full of convincing outputs, human reliability is the one credential that still requires a human.

“Gentlemen, it has been a privilege optimizing our sprint velocity with you tonight.”

What I got wrong two and a half years ago

In my original article, I argued that AI won’t replace software engineers because of accountability, hallucinations, and the need for human judgment. All of that still holds.

But what I missed was the economic layer underneath.

It’s not enough to say “AI won’t replace you.” The real question is: at what price will your skills be valued? Because AI doesn’t need to replace you to reshape your career. It just needs to make your current output cheaper to produce.

The engineers I see thriving right now aren’t the ones who learned the most AI tools. They’re the ones who invested in what AI amplifies rather than replicates:

- Problem framing over answer finding

- Unwritten expertise over theoretical knowledge

- Systems thinking and generalist breadth over siloed specialization

- Specification writing over code writing

- Craft obsession over passive output acceptance

- Encoding experience over keeping it in your head

- Mentoring AI-native juniors over gatekeeping seniority

- Communication fluency over technical fluency

- Authentic trust over polished output

They use AI constantly — but as a multiplier for the things only they can do, not as a substitute for judgment.

I won’t pretend this is easy. The repricing is real, and it’s disorienting. Some of the skills I spent years building are now worth a fraction of what they were.

That stings. 🐝

Now you know where your attention needs to go. So why spend it on panic?

Half the panic in our industry comes from watching YouTube thumbnails with shocked faces and titles like “CODING IS DEAD IN 6 MONTHS.” These are people whose business model is your anxiety. They don’t get paid when you feel confident about your career. They get paid when you click, and fear clicks better than hope.

Stop getting your information from people whose only goal is to grab your attention and drive up their views. Their incentive is your fear, not your growth.

The reality is far more nuanced than any 12-minute video with dramatic music can capture. And the data backs this up. Gartner predicts that by 2027, half the companies that cut staff for AI will rehire workers to perform similar functions — often under different job titles. Forrester’s data is even sharper: 55% of employers already regret AI-driven layoffs. When their VP of Research asked CEOs who announced “we’re replacing 20% of staff with AI” whether they actually had a mature AI system in place — 9 out of 10 times, the answer was no. They fired the humans who held the context before they had anything to replace them with.

And once you filter out the noise, here’s what keeps me grounded.

The printing press didn’t kill writing. It killed scribing. AI won’t kill software engineering either. It will cheapen the repetitive parts and raise the value of the parts that were engineering all along.

TL;DR:

- The boilerplate gets cheaper. Judgment gets more valuable. Craft matters more.

- Let AI handle more of the repetition

- Keep ownership of taste, judgment, and technical excellence

- Use AI to amplify your craft, not replace your thinking

- The value is shifting from typing to deciding

Remember that list from the beginning? The things nobody taught you, but you just know? That’s what’s worth sharpening.