Anthropic published a 30-page report about the future of software development. Plot twist: the future already started. And honestly? It’s the most fun our craft has had in a decade.

The 2026 Agentic Coding Trends Report reads like a forecast: eight trends that “will define agentic coding in 2026,” case studies from Rakuten and Zapier, careful language about “predictions” and “what we anticipate”. It’s well-researched. It’s diplomatically written. And what it’s quietly describing is one of the most thrilling creative moments engineering has ever had.

If you’re reading this report and wondering what it actually changes for you day-to-day — or if the word “report” already made your eyes glaze over — that’s exactly what the rest of this article is about.

Popcorn? 🍿

The pipeline we all learned

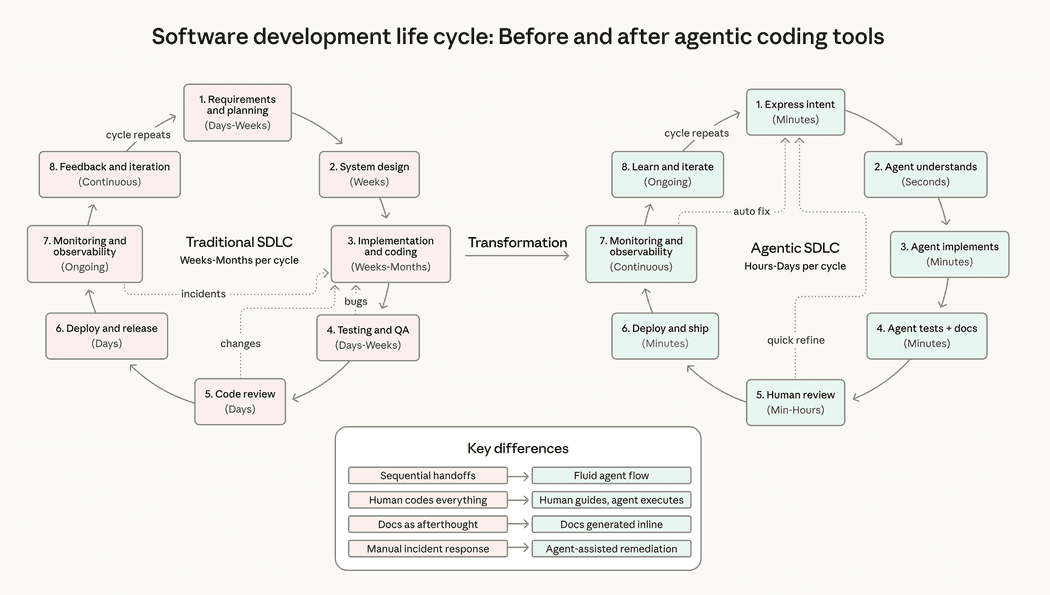

Let’s start with what most of us learned. Whether you studied computer science, did a bootcamp, or picked it up on the job, the software development lifecycle looked something like this:

Requirements → Design → Implementation → Testing → Deployment → Maintenance

Humans at every stage.

A product manager writes the spec. An architect draws the boxes. Developers write the code — every line, every function, every test. QA verifies it. Ops deploys it. And when something breaks at 3 AM, a human wakes up and fixes it.

Estimation was based on human throughput. “How many developer-days will this take?” Code review meant one human reading another human’s code, checking for logic errors, style violations, and that one colleague’s inexplicable love for nested ternaries.

The mental model was clear: you are the builder. Your value is your ability to translate requirements into working software with your own hands. The better you are at writing code, the more valuable you are.

This model worked for decades. It shaped how we hire, how we estimate, how we promote, how we organize teams. It’s baked into job descriptions, interview processes, and performance reviews.

And according to Anthropic’s report, it’s also obsolete.

The pipeline that replaced it

Here’s what the Anthropic report actually describes — translated from fluent Corporate into plain English:

Problem definition → Context engineering → Agent orchestration → Validation → Deployment

So where did all that human work go?

Implementation — the thing most engineers spend most of their day doing — got absorbed by agents. It didn’t disappear. It moved. The human role shifted from writing code to defining the problem precisely enough that an agent can write the code for you.

The report calls this “role transformation: from implementer to orchestrator”. That’s the diplomatic version. The honest version? The thing you spent years getting good at isn’t the bottleneck anymore — which means your judgment, taste, and architectural instincts finally get center stage.

Let’s walk through the new pipeline:

- Problem definition: This is where the real engineering happens now. Vague requirements that humans could “figure out” fall apart quickly when handed to agents. You need to define what to build, why it matters, what constraints apply, and what “done” looks like, with a precision that most teams have never practiced.

- Context engineering: You’re not writing prompts. You’re curating the optimal set of information an agent needs at each step. System prompts, tool definitions, examples, retrieval strategies. Context is a finite resource with diminishing returns: every unnecessary token depletes the model’s attention budget.

- Agent orchestration: Single agents handling one-shot tasks was 2025. The report predicts multi-agent systems where an orchestrator coordinates specialized sub-agents working in parallel. You’re not managing code anymore. You’re managing a team of AI workers, each with a dedicated context window and a focused specialization.

- Validation: This is the new code review, and it’s harder than the old one. Reviewing code you wrote is one thing. Reviewing code an agent wrote (with different patterns, different assumptions, different failure modes) requires a fundamentally different cognitive skill. You need to verify correctness without having built the mental model that comes from writing it yourself.

- Deployment: The only stage that looks roughly the same. For now, I guess?
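To make the orchestration stage a little less abstract, here is a minimal Python sketch of one orchestrator fanning a problem out to specialized sub-agents in parallel. Everything in it is an assumption for illustration: `SubAgent`, `orchestrate`, and the agent names are hypothetical, and the agents are stubs that only format a string instead of calling a model. It is not how the report or any particular product implements this.

```python
import asyncio
from dataclasses import dataclass, field


@dataclass
class SubAgent:
    """Hypothetical stand-in for a specialized agent with its own context."""
    name: str
    specialty: str
    context: list[str] = field(default_factory=list)  # private to this agent

    async def run(self, task: str) -> str:
        # A real agent would call a model here; this stub only records the task.
        self.context.append(task)
        await asyncio.sleep(0)  # yield control, as a network call would
        return f"[{self.name}] {self.specialty}: {task}"


async def orchestrate(problem: str, agents: list[SubAgent]) -> list[str]:
    """Send one problem to every sub-agent concurrently and collect the results."""
    jobs = [agent.run(f"{problem} ({agent.specialty})") for agent in agents]
    # gather() returns results in the same order as the jobs were passed in.
    return await asyncio.gather(*jobs)


def run_demo() -> list[str]:
    agents = [
        SubAgent("agent-a", "implementation"),
        SubAgent("agent-b", "tests"),
        SubAgent("agent-c", "docs"),
    ]
    return asyncio.run(orchestrate("add rate limiting", agents))


if __name__ == "__main__":
    for line in run_demo():
        print(line)
```

The point of the sketch is the shape, not the stubs: each sub-agent keeps its own `context` list, and the orchestrator only sees the results.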

The report’s own diagram shows it: “Traditional SDLC stages remain, but agent-driven implementation, automated testing, and inline documentation collapse cycle time from weeks to hours”. Read that again. From weeks to hours.

The number hiding in plain sight

Buried in the report’s foreword is a statistic that deserves more attention than it’s getting:

Anthropic: Developers use AI in roughly 60% of their work, but report being able to “fully delegate” only 0-20% of tasks.

Most people read this and feel reassured. See? AI can’t do our jobs. We’re still essential.

They’re right — today. But they’re reading the number wrong.

The important thing about 0-20% is not where it is. It’s the direction it’s moving and how fast. Six months ago, that number was closer to zero for most teams. Six months from now, it won’t be 0-20% anymore. The engineers who learned to delegate effectively at 10% will be the ones who know how to delegate at 30%, 50%, 80%.

And here’s the part the report frames delicately: Anthropic’s internal research shows engineers report a “net decrease in time spent per task category, but a much larger net increase in output volume”. Translation: you’re not doing the same work faster. You’re doing dramatically more work in the same time. That’s not a productivity improvement — that’s a redefinition of what “engineer output” means.

The report adds that 27% of AI-assisted work consists of tasks that “wouldn’t have been done otherwise” — scaling projects, building nice-to-have tools, fixing minor issues that were permanently deprioritized. When agents make trivial work free, the bar for what counts as “too small to bother with” drops to zero. Everything becomes worth doing. And the engineers who can orchestrate that expanded scope will look, from the outside, like they’re doing the work of three people.

Because they are.

Reading between the corporate lines

Anthropic is a company that sells AI to developers. Their report is, in part, a sales document. But it’s a surprisingly honest one — if you know how to read corporate language.

Let me translate a few of their diplomatic phrases:

“Human-AI collaboration patterns” — Your job description is changing. The report describes engineers who “develop intuitions for AI delegation over time” and who “delegate tasks that are easily verifiable” while keeping “anything requiring organizational context or ‘taste’ for themselves”. That’s not collaboration. That’s a manager-subordinate relationship with an AI. You’re the manager now. Own it.

“Agentic coding expands to new surfaces and users” — Non-engineers are building software. The report highlights Zapier achieving 89% AI adoption across the entire organization, with design teams prototyping during customer interviews in real-time. Anthropic’s own legal team built self-service tools with Claude Code — no engineering required. The old “I can write code and you can’t” moat is opening up — which means way more people get to build alongside you.

“Multi-agent coordination” — You’re not debugging functions. You’re orchestrating systems of agents, each with their own context window, their own specialization, their own failure modes. Fountain, a workforce management platform mentioned in the report, uses a hierarchical multi-agent architecture where a central orchestrator coordinates sub-agents for screening, document generation, and sentiment analysis. That’s not coding. That’s systems design with AI as the substrate.

“Expedited onboarding to dynamic project staffing” — The report buries a bombshell in dry language: onboarding timelines are “collapsing from weeks to hours”. One Augment Code customer finished a project estimated at 4-8 months in two weeks. When agents can onboard to a codebase instantly, the premium you command for “knowing the codebase” drops to zero. Your value shifts to knowing what the codebase should become.

What to learn now that AI writes the code?

So what do you do about this? The report offers hints, but let me be more direct.

Context engineering is the new core skill. Forget prompt engineering — that was the 2024 version. Context engineering is about designing the entire information environment an agent operates in: system prompts, tool definitions, retrieval strategies, example selection, memory management. Every token counts. Every unnecessary piece of context degrades performance. The engineers who understand this build agents that actually work. The rest of us catch up fast once we see it click.
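Here is a rough Python sketch of what "every token counts" can look like in practice: pick the highest-priority pieces of context that fit a fixed budget and drop the rest. The names (`ContextItem`, `build_context`), the priorities, and the one-token-per-word estimate are all assumptions made up for this example. Real tokenizers and real selection strategies are more involved.

```python
from dataclasses import dataclass


@dataclass
class ContextItem:
    label: str
    text: str
    priority: int  # higher means more important to the task (assumed scale)


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def build_context(items: list[ContextItem], budget: int) -> list[ContextItem]:
    """Greedily keep the highest-priority items that still fit the budget."""
    chosen: list[ContextItem] = []
    used = 0
    for item in sorted(items, key=lambda i: i.priority, reverse=True):
        cost = estimate_tokens(item.text)
        if used + cost <= budget:
            chosen.append(item)
            used += cost
    return chosen


ITEMS = [
    ContextItem("chat-log", "earlier chat about lunch plans and weekend trips "
                            "and other unrelated things", priority=1),
    ContextItem("system", "You review Python pull requests.", priority=3),
    ContextItem("tools", "run_tests: runs the suite", priority=2),
]

if __name__ == "__main__":
    for item in build_context(ITEMS, budget=10):
        print(item.label)
```

With a budget of 10, the system prompt and the tool definition fit, and the unrelated chat log is left out. That is the whole idea in miniature: the agent gets less, and what it gets is more relevant.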

Skills are becoming infrastructure. One of the report’s less-discussed trends is how organizational knowledge is being encoded into reusable skill files that agents call hundreds of times per run. A real estate firm in the report accumulated over 50,000 lines of skills across 50 repositories — covering everything from rent roll standardization to investor handoff protocols. Those skills aren’t just AI tools. They’re the operational documentation for the entire firm. When a new analyst joins, they don’t get a training manual — they get access to the skills library. This is where AI tooling crosses into organizational knowledge management.

Think in orchestration patterns. The specialist stack pattern — where each skill handles one step and the agent becomes an orchestrator, not an expert — is becoming standard. One agent generates a PRD from a brief. Another converts PRDs to GitHub issues. Another writes test cases from issues. The agent doesn’t need domain expertise because the expertise lives in the skill files. Your job is designing that stack, not writing the code inside it.
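The specialist stack above can be sketched in a few lines of Python. This is a toy under stated assumptions: each "skill" here is a plain function with a hypothetical name (`brief_to_prd`, `prd_to_issues`, `issues_to_tests`) that just wraps a string, where a real skill would be a file of instructions an agent follows. The orchestrator knows nothing about the domain. It only passes each step's output to the next step.

```python
from typing import Callable

# A skill takes the previous artifact and returns the next one.
Skill = Callable[[str], str]


def brief_to_prd(brief: str) -> str:
    return f"PRD for: {brief}"


def prd_to_issues(prd: str) -> str:
    return f"Issues from ({prd})"


def issues_to_tests(issues: str) -> str:
    return f"Tests for ({issues})"


# The stack is data: designing it means choosing and ordering the skills.
STACK: list[Skill] = [brief_to_prd, prd_to_issues, issues_to_tests]


def run_stack(brief: str, stack: list[Skill]) -> list[str]:
    """Run each skill on the previous skill's output and keep every artifact."""
    artifact = brief
    trace: list[str] = []
    for skill in stack:
        artifact = skill(artifact)
        trace.append(artifact)
    return trace


if __name__ == "__main__":
    for step in run_stack("export invoices as CSV", STACK):
        print(step)
```

Keeping the full `trace` rather than only the final artifact is a deliberate choice in this sketch: it gives a human something to inspect at every handoff.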

Validation is a craft. The report’s own engineers admit: “I’m primarily using AI in cases where I know what the answer should be or should look like. I developed that ability by doing software engineering ‘the hard way.’” Catching what agents get wrong is harder than writing it yourself, because you don’t have the mental model that comes from having written it. This is the skills paradox the Gartner report identified: juniors need implementation experience to develop the judgment that seniors use to validate AI output. Keep the junior pipeline healthy: those are the engineers who’ll develop the instincts your team needs to validate what agents produce.
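One way to practice "knowing what the answer should look like" is to write the expected cases before you delegate, then accept the agent's output only if it passes them. The Python sketch below is a minimal illustration of that habit, not a method from the report. The `validate` helper, the slugify task, and both candidate functions are hypothetical and stand in for code an agent might hand back.

```python
from typing import Callable

# Cases written by the human up front: (input, expected output).
CASES: list[tuple[str, str]] = [
    ("Hello World", "hello-world"),
    ("  Trim me ", "trim-me"),
]


def validate(candidate: Callable[[str], str],
             cases: list[tuple[str, str]]) -> list[str]:
    """Return a list of failure messages; an empty list means all cases pass."""
    failures: list[str] = []
    for given, expected in cases:
        try:
            actual = candidate(given)
        except Exception as exc:
            failures.append(f"{given!r}: raised {type(exc).__name__}")
            continue
        if actual != expected:
            failures.append(f"{given!r}: expected {expected!r}, got {actual!r}")
    return failures


def agent_slugify_v1(text: str) -> str:
    # Plausible first attempt: forgets about surrounding whitespace.
    return text.lower().replace(" ", "-")


def agent_slugify_v2(text: str) -> str:
    # Revised attempt: split() discards leading, trailing, and repeated spaces.
    return "-".join(text.lower().split())


if __name__ == "__main__":
    print(validate(agent_slugify_v1, CASES))
    print(validate(agent_slugify_v2, CASES))
```

The first candidate looks fine at a glance and still fails the whitespace case, which is exactly the kind of miss that is easy to overlook when you did not write the code yourself.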

The job market is following the trend

It feels like engineers who use AI are replacing engineers who don’t.

The Anthropic report’s case studies tell the same story from different angles. Rakuten’s engineers tested Claude Code on a complex task in vLLM — a library with 12.5 million lines of code — and the agent finished the implementation in seven hours of autonomous work with 99.9% numerical accuracy. CRED doubled their execution speed across their entire development lifecycle. TELUS shipped engineering code 30% faster while creating over 13,000 custom AI solutions.

These aren’t experiments. These are production systems at scale. And every one of them shows the same pattern: teams that adopted early compounded their advantage. They developed delegation intuitions. They built orchestration patterns. They learned what agents are good at and what still needs human judgment. And now they’re operating at a velocity that late adopters can’t match by simply “trying harder”.

The gap between early adopters and late movers is widening. The report says it. The market confirms it. And the engineers in the middle — the ones who “use Copilot sometimes” but haven’t fundamentally rethought how they work — have the most to gain from stepping all the way in. A few weeks of intentional practice is the whole difference between feeling productive and actually leading.

The development lifecycle you learned is already gone. The report Anthropic published isn’t a warning about the future; it’s a description of the present. 2026 is the year LLMs can really do magic, and it’s already happening.

The question isn’t whether you’ll work with AI. It’s how soon you start leading it.